by Krückeberg Lena, Müller Isabella, Peycheva Reneta, Ried Raphaela, Schmid Denise, Urban Pebia and Zografova Eliza

INTRODUCTION

Over the last decades, social protection programs have become increasingly computerised, making the digital collection of personal data an integral part of welfare systems.[1] These technologies make it possible to collect and verify large amounts of data in a short time, which simplifies the whole procedure. All social programs rely on some “legibility” scheme to check the eligibility of beneficiaries and make them readable to the state.[2] But as our research shows, social programs can end up doing more harm than good when people’s personal data is used to their disadvantage.

WHAT IS HAPPENING?

The beneficiaries of social protection schemes are, more often than not, marginalized populations in need of some kind of help. Being at a disadvantage, they are not in a position to question the extent and purpose of the data that is requested from them. The collected data covers a range of information, from biometrics to social media monitoring. It can then be shared with multiple government agencies and private stakeholders, or even be posted online for transparency reasons.[3] This can compromise personal information and make beneficiaries susceptible to scams. The data can also be used for crime investigation, which leaves people registered in social programs overrepresented in crime databases.[4]

THE PROBLEM

The central problem, which needs to be addressed globally, is the lack of transparency in the data collection process, which can lead to misuse of the data. In this policy brief, we substantiate this issue, lay out our plan for how it should be addressed and give some example solutions.

#BolsaFamiliaProgram

As a concrete example, we want to look at the Bolsa Familia Program in Brazil. The Bolsa Familia Program is a social welfare program run by the Brazilian government that provides financial support for families in need. In exchange for the financial support, beneficiaries pay with their data, including sensitive data such as children’s vaccination cards, information about medical check-ups and reports on regular school attendance.

The selection of families for the program is automated and based on data stored in the database.[5] This means that the decision on whether a family receives financial help depends on its data. To receive the benefits, families have to fulfil the program’s requirements. But such cases call for individual treatment, or at least a thorough review, since algorithmic decision-making cannot take all factors into account. The problem is not only the data-based decision-making, but also the handling of the data.[6] The Bolsa Familia Law provides that the list of beneficiaries, and even the individual benefit amounts, should be publicly accessible.

Because of this disclosure of data, several incidents have already occurred, such as a virus spread through WhatsApp via a link that promised an extra month’s payment. Another case of misuse occurred in 2018 during the presidential election, when a candidate sent propaganda messages via WhatsApp to beneficiaries.[7]

BIG BROTHER IS WATCHING YOUR DATA

We want to focus on global data rights issues, using Brazil and India as examples of global injustices, and to derive possible ways of handling these issues from the experience of a welfare state like Austria. Austria is our case example of a country that addresses these issues under the EU data protection regime.

We were inspired by the “A Digital New Deal” essays and want to draw attention to global data rights issues in social programs through our pilot countries, Brazil and India. The Global South is especially affected by data misuse, but it is a global problem that needs a global solution.

Here in Austria we don’t have a program like the Bolsa Familia Program, but we have the “family allowance”, which also provides financial support for families.

77 DIFFERENT PIECES OF INFORMATION

In Brazil, the program is linked to certain education and health outcomes of children. Furthermore, the health of the women who act as representatives of the family is also monitored by the program.[8] In Austria, the family allowance is independent of employment or income, and you don’t have to prove any education outcomes of your children or any health outcomes of either the children or the parents. In Brazil, the representative of the family has to provide around 77 different pieces of information for the registry[9], which is a lot compared to Austria, where only about 30 pieces of information are needed.

Private companies in Brazil have full access to the registry database, which has been used for commercial purposes in the past. In Austria, no private company has access to this data. Another major problem in Brazil is that personal information from the registry is published online. In Austria, none of your personal data is published anywhere. Furthermore, participants in the Bolsa Familia Program are monitored for what they spend their money on.[10] In Austria, nobody is monitored for how they use their family allowance.

WHAT IS HAPPENING IN INDIA?

India’s “Aadhaar” database is the largest biometric database in the world. To become part of the system, people need to confirm their identity through an iris and fingerprint scan. This system creates injustice because it discriminates against people whose fingerprints cannot be read because hard manual work has damaged them or worn them away.[11] This means that precisely the people who need help the most are excluded or treated unfairly.

In Austria, you don’t need to be part of a program that requires a fingerprint or iris scan to receive social benefits. Support is available for everyone without any kind of discrimination.

THE CHANGE

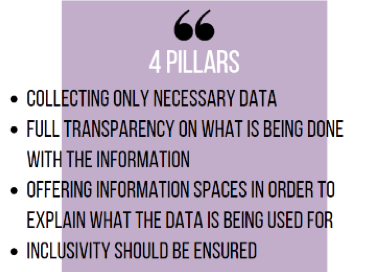

As shown, Brazil is just our case example of data misuse. The problem exists not only in Brazil but globally, especially in the Global South, where the collection of data brings a huge power shift towards those who hold the most data.[12] Since every country regulates data privacy differently, it is difficult to establish a uniform way of processing data. To find a consensus, we propose that the member states of the UN adhere to a set of common pillars. This would give the different countries common regulatory guidelines.

A first pillar would be to collect only the data that is necessary. This would help prevent misuse of information: social programs would have the information they need and nothing more.

Another pillar would be full transparency. It is absolutely essential that people are informed about what information is being collected and what is being done with that information. If the information is being given to other companies, the people concerned should know why this is being done and how. If anything changes in the policy, that should be communicated.

A third pillar would be to offer information spaces for people before they enrol in a social program. This would avoid misunderstandings between the social programs and the people who need them. Since terms and conditions are not always clear to everyone, it is important to give everyone an opportunity to understand what is happening. When accessing those information spaces, people should be made aware of which information is being used, how and for what purpose. This is essential to supporting full transparency, so that everyone understands what their data is being processed for.

Lastly, inclusivity should be ensured by analyzing the issues of social programs and how some of their aspects actually promote discrimination. For instance, as discussed earlier, the Aadhaar biometric database does not ensure easy access to welfare for everyone. Its purpose is easier access, but it is simply not accessible to everyone. So it should be a priority not to exclude people if the intention is to help exactly those people.

These four pillars would, in our opinion, help make governments and social programs more transparent in their data collection and help avoid misuse of information.

OUTCOMES

Outcomes can be changes in laws and policies. Another outcome can be an administrative or regulatory change, but raising the visibility of an issue and changing public opinion around it can also be an outcome. Moreover, we aim to raise awareness among the general public. Our plan is to contribute to a change in law under which data policy is more strictly regulated than it currently is in most countries.

POLICY PRINCIPLES

In deciding which policy principles to base our research on, we chose the Essential Principles for Contemporary Media and Communications Policymaking as a guideline. This report makes several essential points. It underlines, for example, that transparency and accountability should be a given.[13] We agree with this and therefore incorporated this principle into our four pillars.

Under this principle, it is made clear that information should be provided to ensure that consumers understand how their information is being processed.

This goes hand in hand with the pursuit of equitable and effective policy outcomes. Here as well, the importance of public consultation and participation in the policy process is underlined.

Another important point is the protection of personal privacy and the avoidance of invasive state surveillance and data misuse.

WHO IS THE AUDIENCE

Our main audience is the UN as well as local NGOs in Brazil. We want to use this cooperation with NGOs in Brazil as a pilot project to see if it works, and then extend the procedure to other countries and team up with NGOs there.

The four pillars proposed earlier are general measures that should be applied globally. The audience we want to reach is the population of Brazil, especially the people in the Bolsa Familia Program. Given that they are already the poorest in the country, it is a problem that they don’t know what data about them is being collected and what is done with it. It is important to clarify their rights in order to ensure that they do not become victims of surveillance.[14]

WHO WE ARE

We are a group from the University of Vienna representing the NGO ‘Data Rights’, a non-profit institution that protects, enforces and improves data rights. The organization protects human rights and tries to stop the spread of data in exchange for security.[15]

THE PLAN

We want to team up with NGOs in Brazil and help them raise awareness so that they can put pressure on their government. Because of COVID-19 measures, online solutions are needed. To inform and mobilize people, a social media campaign combined with a hashtag campaign could raise awareness. Another option is to work with a well-known media outlet to cover the problem and to conduct interviews or record a short film with victims. Possible consequences are large protests and resistance.

To succeed with the actions and methods we have chosen, we will need three types of engagement, namely activism, advocacy and allyship. Although they differ from each other, they complement each other perfectly. Activists take direct action to achieve a political or social goal. Advocacy is expressed through a group of people who support our cause and give voice to the problem, and allies are the people, or a particular person, who act as helpers. To achieve our goals, these 3A’s are of great importance. We need activism to make a change, we do advocacy to speak for others and take action, and especially in our case we need allyship for active work to help people who are discriminated against through data.

[1] Silvia Masiero & Soumyo Das. (2019). Datafying anti-poverty programmes: implications for data justice. Information, Communication & Society.

[2] Mariana Valente & Nathalie Fragoso. (2020). Data Rights and Collective Needs: A New Framework for Social Protection in a Digitized World.

[3] Mariana Valente & Nathalie Fragoso. (2020). Data Rights and Collective Needs: A New Framework for Social Protection in a Digitized World.

[4] Mariana Valente & Nathalie Fragoso. (2020). Data Rights and Collective Needs: A New Framework for Social Protection in a Digitized World.

[5] Privacy International. (2020). Brazil’s bolsa familia program: The impact on privacy rights.

[6] Mariana Valente & Nathalie Fragoso. (2020). Data Rights and Collective Needs: A New Framework for Social Protection in a Digitized World.

[7] Privacy International. (2020). Brazil’s bolsa familia program: The impact on privacy rights.

[8] Privacy International. (2020). Brazil’s bolsa familia program: The impact on privacy rights.

[9] Mariana Valente & Nathalie Fragoso. (2020). Data Rights and Collective Needs: A New Framework for Social Protection in a Digitized World.

[10] Privacy International. (2020). Brazil’s bolsa familia program: The impact on privacy rights.

[11] Pallavi Polanki. (2020). India’s vanishing fingerprints put UID in question.

[12] Linnet Taylor & Dennis Broeders. (2015). In the name of Development: Power, profit and the datafication.

[13] Picard & Pickard. (2017). Essential Principles for Contemporary Media and Communications Policymaking.

[14] Valente & Fragoso. (2021). Data rights and collective needs.

[15] NGO “Data Rights”. (2020). https://datarights.ngo/